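This article is reprinted from the WeChat official account "Java互联网大数据与数据库管理" (Java Internet Big Data and Database Management); the author is 柯同学. To reprint it, please contact that account.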

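This is an HBase cluster incident from a while back. A careless, non-standard operation left the cluster unable to start. Even with help from experts at Tencent Cloud, it took an entire Sunday to repair. Truly a "lovely" memory.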

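Version Information

Problem

During an idle period I wanted to restart HBase to free up some memory, and at the same time change a few YARN settings. After I stopped it, HBase would not come back up. The error said the hbase:namespace table "is not online", and the Master stayed stuck in initialization. The detailed errors: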

```text
15:41:59.313 [ProcExecTimeout] WARN org.apache.hadoop.hbase.master.assignment.AssignmentManager - STUCK Region-In-Transition rit=OPENING, location=node4,16020,1589648302672, table=real_time_data, region=74cac15d22e99800ad0ace14c9ed74d6
15:41:59.313 [ProcExecTimeout] WARN org.apache.hadoop.hbase.master.assignment.AssignmentManager - STUCK Region-In-Transition rit=OPENING, location=node3,16020,1596598630022, table=real_time_data, region=8e68891d5827c09974d81ad5d705c3b6
15:41:59.313 [ProcExecTimeout] WARN org.apache.hadoop.hbase.master.assignment.AssignmentManager - STUCK Region-In-Transition rit=OPENING, location=node3,16020,1596598630022, table=real_time_data, region=75c42d75e2556bf70ff527f2425e8509
15:41:59.313 [ProcExecTimeout] WARN org.apache.hadoop.hbase.master.assignment.AssignmentManager - STUCK Region-In-Transition rit=OPENING, location=node3,16020,1596598630022, table=real_time_data, region=2eee04869ac2c35984d4d22e6e9f2f31
15:42:08.264 [master/node3:16000] INFO org.apache.hadoop.hbase.client.RpcRetryingCallerImpl - Call exception, tries=15, retries=15, started=128887 ms ago, cancelled=false, msg=org.apache.hadoop.hbase.NotServingRegionException: hbase:namespace,,1558205786137.40562c48c9210c06813adce48773cb6a. is not online on node1,16020,1596957741742
	at org.apache.hadoop.hbase.regionserver.HRegionServer.getRegionByEncodedName(HRegionServer.java:3273)
	at org.apache.hadoop.hbase.regionserver.HRegionServer.getRegion(HRegionServer.java:3250)
	at org.apache.hadoop.hbase.regionserver.RSRpcServices.getRegion(RSRpcServices.java:1414)
	at org.apache.hadoop.hbase.regionserver.RSRpcServices.get(RSRpcServices.java:2446)
	at org.apache.hadoop.hbase.shaded.protobuf.generated.ClientProtos$ClientService$2.callBlockingMethod(ClientProtos.java:41998)
	at org.apache.hadoop.hbase.ipc.RpcServer.call(RpcServer.java:409)
	at org.apache.hadoop.hbase.ipc.CallRunner.run(CallRunner.java:131)
	at org.apache.hadoop.hbase.ipc.RpcExecutor$Handler.run(RpcExecutor.java:324)
	at org.apache.hadoop.hbase.ipc.RpcExecutor$Handler.run(RpcExecutor.java:304)
, details=row 'default' on table 'hbase:namespace' at region=hbase:namespace,,1558205786137.40562c48c9210c06813adce48773cb6a., hostname=node1,16020,1589648239142, seqNum=55
... ...
```

```text
15:44:58.229 [qtp1792826268-435] WARN org.eclipse.jetty.servlet.ServletHandler - /master-status
org.apache.hadoop.hbase.PleaseHoldException: Master is initializing
	at org.apache.hadoop.hbase.master.HMaster.isInMaintenanceMode(HMaster.java:2827) ~[hbase-server-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.apache.hadoop.hbase.tmpl.master.MasterStatusTmplImpl.renderNoFlush(MasterStatusTmplImpl.java:271) ~[hbase-server-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.apache.hadoop.hbase.tmpl.master.MasterStatusTmpl.renderNoFlush(MasterStatusTmpl.java:389) ~[hbase-server-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.apache.hadoop.hbase.tmpl.master.MasterStatusTmpl.render(MasterStatusTmpl.java:380) ~[hbase-server-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.apache.hadoop.hbase.master.MasterStatusServlet.doGet(MasterStatusServlet.java:81) ~[hbase-server-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at javax.servlet.http.HttpServlet.service(HttpServlet.java:687) ~[javax.servlet-api-3.1.0.jar:3.1.0]
	at javax.servlet.http.HttpServlet.service(HttpServlet.java:790) ~[javax.servlet-api-3.1.0.jar:3.1.0]
	at org.eclipse.jetty.servlet.ServletHolder.handle(ServletHolder.java:848) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1772) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.apache.hadoop.hbase.http.lib.StaticUserWebFilter$StaticUserFilter.doFilter(StaticUserWebFilter.java:112) ~[hbase-http-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.eclipse.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1759) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.apache.hadoop.hbase.http.ClickjackingPreventionFilter.doFilter(ClickjackingPreventionFilter.java:48) ~[hbase-http-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.eclipse.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1759) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.apache.hadoop.hbase.http.HttpServer$QuotingInputFilter.doFilter(HttpServer.java:1374) ~[hbase-http-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.eclipse.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1759) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.apache.hadoop.hbase.http.NoCacheFilter.doFilter(NoCacheFilter.java:49) ~[hbase-http-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.eclipse.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1759) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.apache.hadoop.hbase.http.NoCacheFilter.doFilter(NoCacheFilter.java:49) ~[hbase-http-2.0.0.3.0.0.0-1634.jar:2.0.0.3.0.0.0-1634]
	at org.eclipse.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1759) ~[jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.servlet.ServletHandler.doHandle(ServletHandler.java:582) [jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.handler.ScopedHandler.handle(ScopedHandler.java:143) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.security.SecurityHandler.handle(SecurityHandler.java:548) [jetty-security-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.session.SessionHandler.doHandle(SessionHandler.java:226) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.handler.ContextHandler.doHandle(ContextHandler.java:1180) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.servlet.ServletHandler.doScope(ServletHandler.java:512) [jetty-servlet-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.session.SessionHandler.doScope(SessionHandler.java:185) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.handler.ContextHandler.doScope(ContextHandler.java:1112) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.handler.ScopedHandler.handle(ScopedHandler.java:141) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.handler.HandlerCollection.handle(HandlerCollection.java:119) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.handler.HandlerWrapper.handle(HandlerWrapper.java:134) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.Server.handle(Server.java:534) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.HttpChannel.handle(HttpChannel.java:320) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.server.HttpConnection.onFillable(HttpConnection.java:251) [jetty-server-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.io.AbstractConnection$ReadCallback.succeeded(AbstractConnection.java:283) [jetty-io-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.io.FillInterest.fillable(FillInterest.java:108) [jetty-io-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.io.SelectChannelEndPoint$2.run(SelectChannelEndPoint.java:93) [jetty-io-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.util.thread.strategy.ExecuteProduceConsume.executeProduceConsume(ExecuteProduceConsume.java:303) [jetty-util-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.util.thread.strategy.ExecuteProduceConsume.produceConsume(ExecuteProduceConsume.java:148) [jetty-util-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.util.thread.strategy.ExecuteProduceConsume.run(ExecuteProduceConsume.java:136) [jetty-util-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.util.thread.QueuedThreadPool.runJob(QueuedThreadPool.java:671) [jetty-util-9.3.19.v20170502.jar:9.3.19.v20170502]
	at org.eclipse.jetty.util.thread.QueuedThreadPool$2.run(QueuedThreadPool.java:589) [jetty-util-9.3.19.v20170502.jar:9.3.19.v20170502]
	at java.lang.Thread.run(Thread.java:745) [?:1.8.0_121]
```
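When the Master log is long, it can help to pull the stuck regions out of the `STUCK Region-In-Transition` warnings and group them by RegionServer. The sketch below does this with a regular expression. `StuckRitScanner` is a hypothetical helper, and the pattern is an assumption based only on the sample lines in this post, not on a documented log format.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper: extracts stuck regions from AssignmentManager warnings. */
final class StuckRitScanner {

    /** One stuck region parsed from a log line. */
    static final class StuckRegion {
        final String state;
        final String location;
        final String table;
        final String region;

        StuckRegion(String state, String location, String table, String region) {
            this.state = state;
            this.location = location;
            this.table = table;
            this.region = region;
        }
    }

    // Assumed format, copied from the sample warnings:
    // "STUCK Region-In-Transition rit=OPENING, location=<host,port,startcode>, table=<name>, region=<encoded>"
    private static final Pattern STUCK = Pattern.compile(
            "STUCK Region-In-Transition rit=(\\w+), location=(\\S+?), table=(\\S+?), region=(\\w+)");

    /** Returns the stuck regions found in the given log lines, in log order. */
    static List<StuckRegion> parse(List<String> lines) {
        List<StuckRegion> result = new ArrayList<>();
        for (String line : lines) {
            Matcher m = STUCK.matcher(line);
            if (m.find()) {
                result.add(new StuckRegion(m.group(1), m.group(2), m.group(3), m.group(4)));
            }
        }
        return result;
    }

    /** Groups encoded region names by the server they are stuck on, preserving first-seen order. */
    static Map<String, List<String>> byServer(List<StuckRegion> regions) {
        Map<String, List<String>> grouped = new LinkedHashMap<>();
        for (StuckRegion r : regions) {
            grouped.computeIfAbsent(r.location, k -> new ArrayList<>()).add(r.region);
        }
        return grouped;
    }
}
```

Applied to the four warnings in this post, the sketch reports one `real_time_data` region stuck in OPENING on node4 and three on node3. Lines that do not match the pattern, such as the RPC retry message, are simply ignored.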

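First Attempts

At this point I tried to use the hbck command to inspect and repair the cluster. I found that in HBase 2.0.0, hbck no longer supports its repair options: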

```text
-----------------------------------------------------------------------
NOTE: As of HBase version 2.0, the hbck tool is significantly changed.
In general, all Read-Only options are supported and can be be used
safely. Most -fix/ -repair options are NOT supported. Please see usage
below for details on which options are not supported.
-----------------------------------------------------------------------
... (several lines omitted) ...
NOTE: Following options are NOT supported as of HBase version 2.0 .
UNSUPPORTED Metadata Repair options: (expert features, use with caution!)
  -fix Try to fix region assignments. This is for backwards compatiblity
  -fixAssignments Try to fix region assignments. Replaces the old -fix
  -fixMeta Try to fix meta problems. This assumes HDFS region info is good.
  -fixHdfsHoles Try to fix region holes in hdfs.
  -fixHdfsOrphans Try to fix region dirs with no .regioninfo file in hdfs
  -fixTableOrphans Try to fix table dirs with no .tableinfo file in hdfs (online mode only)
  -fixHdfsOverlaps Try to fix region overlaps in hdfs.
  -maxMerge <n> When fixing region overlaps, allow at most <n> regions to merge. (n=5 by default)
  -sidelineBigOverlaps When fixing region overlaps, allow to sideline big overlaps
  -maxOverlapsToSideline <n> When fixing region overlaps, allow at most <n> regions to sideline per group. (n=2 by default)
  -fixSplitParents Try to force offline split parents to be online.
  -removeParents Try to offline and sideline lingering parents and keep daughter regions.
  -fixEmptyMetaCells Try to fix hbase:meta entries not referencing any region (empty REGIONINFO_QUALIFIER rows)
UNSUPPORTED Metadata Repair shortcuts
  -repair Shortcut for -fixAssignments -fixMeta -fixHdfsHoles -fixHdfsOrphans -fixHdfsOverlaps -fixVersionFile -sidelineBigOverlaps -fixReferenceFiles-fixHFileLinks
  -repairHoles Shortcut for -fixAssignments -fixMeta -fixHdfsHoles
```

Next, while searching for information, I came across HBCK2 (official repository: https://github.com/apache/hbase-operator-tools/tree/master/hbase-hbck2). I thought I had finally grabbed a lifeline, but:

```text
===================================================================
HBCK2 Overview

HBCK2 is currently a simple tool that does one thing at a time only.

In hbase-2.x, the Master is the final arbiter of all state, so a general principal for most HBCK2 commands is that it asks the Master to effect all repair. This means a Master must be up before you can run HBCK2 commands.

The HBCK2 implementation approach is to make use of an HbckService hosted on the Master. The Service publishes a few methods for the HBCK2 tool to pull on. Therefore, for HBCK2 commands relying on Master's HbckService facade, first thing HBCK2 does is poke the cluster to ensure the service is available. This will fail if the remote Server does not publish the Service or if the HbckService is lacking the requested method. For the latter case, if you can, update your cluster to obtain more fix facility.

HBCK2 versions should be able to work across multiple hbase-2 releases. It will fail with a complaint if it is unable to run. There is no HbckService in versions of hbase before 2.0.3 and 2.1.1. HBCK2 will not work against these versions.

Next we look first at how you 'find' issues in your running cluster followed by a section on how you 'fix' found problems.
===================================================================
```

Unbelievable. HBase 2.0.0 to 2.0.2 and HBase 2.1.0 fall into a gap. On these versions hbck can no longer repair anything, and HBCK2 cannot run because the Master has no HbckService.
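The compatibility rule quoted from the HBCK2 README (no HbckService before 2.0.3 and 2.1.1) can be turned into a quick pre-flight check before trying HBCK2. This is a minimal sketch. `Hbck2Gate` and `hasHbckService` are hypothetical names, and the check only reads the leading major.minor.patch numbers. A vendor build string such as `2.0.0.3.0.0.0-1634` (from the jar names in the stack trace) may carry backports that this check cannot see. Even on a supported version, HBCK2 still needs a running Master.

```java
/** Hypothetical pre-flight check based on the version note in the HBCK2 README. */
final class Hbck2Gate {

    /** Returns {major, minor, patch} parsed from the start of a version string. */
    static int[] parse(String version) {
        // Keep only the leading "x.y.z" part, e.g. "2.0.0.3.0.0.0-1634" -> 2, 0, 0.
        String[] parts = version.split("[.-]");
        if (parts.length < 3) {
            throw new IllegalArgumentException("Unexpected version: " + version);
        }
        return new int[] {
            Integer.parseInt(parts[0]), Integer.parseInt(parts[1]), Integer.parseInt(parts[2])
        };
    }

    /** True if a Master of this version is expected to host the HbckService. */
    static boolean hasHbckService(String version) {
        int[] v = parse(version);
        int major = v[0], minor = v[1], patch = v[2];
        if (major < 2) {
            return false;        // HBCK2 targets hbase-2 releases only
        }
        if (major > 2 || minor >= 2) {
            return true;         // assumption: every release after the 2.1 line ships the service
        }
        if (minor == 0) {
            return patch >= 3;   // 2.0.0 - 2.0.2 lack the service
        }
        return patch >= 1;       // 2.1.x: only 2.1.0 lacks the service
    }
}
```

For the cluster in this post, `hasHbckService("2.0.0.3.0.0.0-1634")` returns false. That matches the dead end described above.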

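Solution

1. Repair the Master so the cluster can start normally

The Master could not finish initializing, so the cluster could not start. Because the metadata in hbase:meta was damaged, the hbase:namespace table was not online. The first priority was to bring hbase:namespace online and get the HBase cluster running, because none of the later repair work could proceed otherwise. Only after that could the remaining tables be repaired. (At this point I was in despair and was already preparing to rebuild the cluster.)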

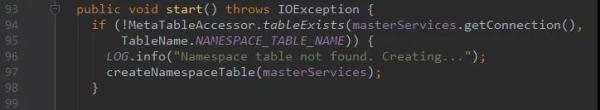

Reading the HBase source, I found that the Master recreates the hbase:namespace system table if it does not exist (TableNamespaceManager.java):

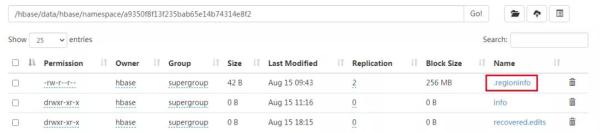

Plan:

- Back up the HDFS data of the hbase:namespace table.
- Delete the table.
- Restart HBase so that the table is recreated.
- Bulkload the backed-up data into the new hbase:namespace table.

First, delete the hbase:namespace row from hbase:meta. Then mv the table's HDFS directory to a temporary directory as a backup. Together, these two steps amount to deleting the hbase:namespace table.
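To find the directory to move, it helps to know how a region name maps onto HDFS. In HBase 2.x a table's data lives under `<hbase.rootdir>/data/<namespace>/<table>/<encoded region name>`. A full region name such as `hbase:namespace,,1558205786137.40562c48c9210c06813adce48773cb6a.` (from the log above) has the form `<table>,<start key>,<region id>.<encoded name>.`. The sketch below derives the table and region directories from such a name. `RegionPaths` is a hypothetical helper. The root directory passed in is an assumption: read the real value from `hbase.rootdir` in hbase-site.xml.

```java
/** Hypothetical helper: maps an HBase 2.x region name to its HDFS directories. */
final class RegionPaths {

    final String namespace;
    final String qualifier;
    final String encodedName;

    private RegionPaths(String namespace, String qualifier, String encodedName) {
        this.namespace = namespace;
        this.qualifier = qualifier;
        this.encodedName = encodedName;
    }

    /** Parses "<table>,<start key>,<region id>.<encoded name>." */
    static RegionPaths fromRegionName(String regionName) {
        if (!regionName.endsWith(".")) {
            throw new IllegalArgumentException("Expected a trailing '.': " + regionName);
        }
        String trimmed = regionName.substring(0, regionName.length() - 1);
        int comma = trimmed.indexOf(',');      // table names cannot contain ','
        int dot = trimmed.lastIndexOf('.');    // the encoded name is hex, so it has no '.'
        if (comma < 0 || dot < comma) {
            throw new IllegalArgumentException("Not a region name: " + regionName);
        }
        String table = trimmed.substring(0, comma);
        String encoded = trimmed.substring(dot + 1);
        int colon = table.indexOf(':');
        // Tables without an explicit namespace belong to "default".
        String ns = colon < 0 ? "default" : table.substring(0, colon);
        String qualifier = colon < 0 ? table : table.substring(colon + 1);
        return new RegionPaths(ns, qualifier, encoded);
    }

    /** <rootDir>/data/<namespace>/<table> */
    String tableDir(String rootDir) {
        return rootDir + "/data/" + namespace + "/" + qualifier;
    }

    /** <rootDir>/data/<namespace>/<table>/<encoded region name> */
    String regionDir(String rootDir) {
        return tableDir(rootDir) + "/" + encodedName;
    }
}
```

With the example root `/apps/hbase/data`, which is only an illustrative value, `tableDir` for the name above gives `/apps/hbase/data/data/hbase/namespace`. That is the directory this step moves aside.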

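Next, restart the HBase cluster. The namespace table is recreated, and the cluster finally comes up. At this point the only namespace stored in hbase:namespace is the built-in default namespace. We then use the bulkload command to move the HFiles from the temporary directory into hbase:namespace, which restores the namespace table.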
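Bulk loading expects an input directory whose subdirectories are named after column families and contain the HFiles. A backed-up region directory also holds other entries, such as the `.regioninfo` file and possibly `recovered.edits`. The sketch below walks a local copy of the backed-up region directory and groups HFiles by column family. Working on a local copy (for example one fetched with `hdfs dfs -get`) is an assumption that keeps the example JDK-only. `BulkloadLayout` is hypothetical, and its skip rules are assumptions, not HBase's exact rules.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical helper: lists HFiles per column family in a backed-up region directory. */
final class BulkloadLayout {

    /** Assumed skip rule: hidden entries, "_"-prefixed entries and recovered.edits are not families or HFiles. */
    private static boolean skip(Path p) {
        String name = p.getFileName().toString();
        return name.startsWith(".") || name.startsWith("_") || name.equals("recovered.edits");
    }

    /** Maps each column-family directory name to the sorted HFile names inside it. */
    static Map<String, List<String>> hfilesByFamily(Path regionDir) {
        Map<String, List<String>> result = new TreeMap<>();
        try (DirectoryStream<Path> entries = Files.newDirectoryStream(regionDir)) {
            for (Path family : entries) {
                if (!Files.isDirectory(family) || skip(family)) {
                    continue; // e.g. the .regioninfo file or the recovered.edits directory
                }
                List<String> hfiles = new ArrayList<>();
                try (DirectoryStream<Path> files = Files.newDirectoryStream(family)) {
                    for (Path f : files) {
                        if (Files.isRegularFile(f) && !skip(f)) {
                            hfiles.add(f.getFileName().toString());
                        }
                    }
                }
                Collections.sort(hfiles);
                result.put(family.getFileName().toString(), hfiles);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return result;
    }
}
```

The sketch only inspects the layout. Moving the files and running the bulkload itself is still done with the HBase tooling.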

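2. Repair the HBase tables

The cluster was finally up, but the web UI showed that every table was empty. None of the regions could be matched to their data files on HDFS.

The hbase:meta table stores the region assignment information for all tables. The abnormal shutdown most likely damaged hbase:meta, which is why hbase:namespace could not find its assigned region.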

Approach: rebuild hbase:meta from the .regioninfo files stored in each region directory. Reference blog post: https://blog.csdn.net/xyzkenan/article/details/103476160

Tool: https://github.com/DarkPhoenixs/hbase-meta-repair
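Rebuilding hbase:meta from `.regioninfo` files starts by comparing what is on HDFS with what hbase:meta still knows about. The sketch below shows that comparison. It lists the region directories under a table directory that contain a `.regioninfo` file, then reports those missing from a given set of encoded region names taken from hbase:meta. It is not the linked tool's implementation. `MetaGap` is hypothetical, and it works on a local copy of the table directory (an assumption that keeps it JDK-only). Collecting the names already present in hbase:meta is left out.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Set;
import java.util.TreeSet;

/** Hypothetical helper: finds region directories that hbase:meta does not reference. */
final class MetaGap {

    /** Encoded names of subdirectories of tableDir that contain a .regioninfo file. */
    static Set<String> regionsOnDisk(Path tableDir) {
        Set<String> regions = new TreeSet<>();
        try (DirectoryStream<Path> dirs = Files.newDirectoryStream(tableDir)) {
            for (Path d : dirs) {
                // Entries such as .tabledesc or .tmp have no .regioninfo and are skipped.
                if (Files.isDirectory(d) && Files.isRegularFile(d.resolve(".regioninfo"))) {
                    regions.add(d.getFileName().toString());
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return regions;
    }

    /** Regions present on disk but absent from hbase:meta: candidates for re-registration. */
    static Set<String> missingFromMeta(Path tableDir, Set<String> encodedNamesInMeta) {
        Set<String> missing = regionsOnDisk(tableDir);
        missing.removeAll(encodedNamesInMeta);
        return missing;
    }
}
```

Each name that this reports would then need a row rebuilt in hbase:meta from its `.regioninfo`. That step is what the linked tool automates.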

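Finally solved. It was a real scare but it ended well, so cherish the good life. To sum up, in this incident the HBase cluster could not start, with two main symptoms:

The approach to handling it was: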